What is glu?¶

glu is a free/open source deployment and monitoring automation platform.

What problems does glu solve?¶

glu solves the following problems:

- deploy (and monitor) applications to an arbitrarily large set of nodes:

  - efficiently

  - with minimum/no human interaction

  - securely

  - in a reproducible manner

- ensure consistency over time (prevent drifting)

- detect and troubleshoot quickly when problems arise

How does it work?¶

glu takes a very declarative approach, in which you describe/model what you want, and glu can then:

- compute the set of actions to deploy/upgrade your applications

- ensure that it remains consistent over time

- detect and alert you when there is a mismatch

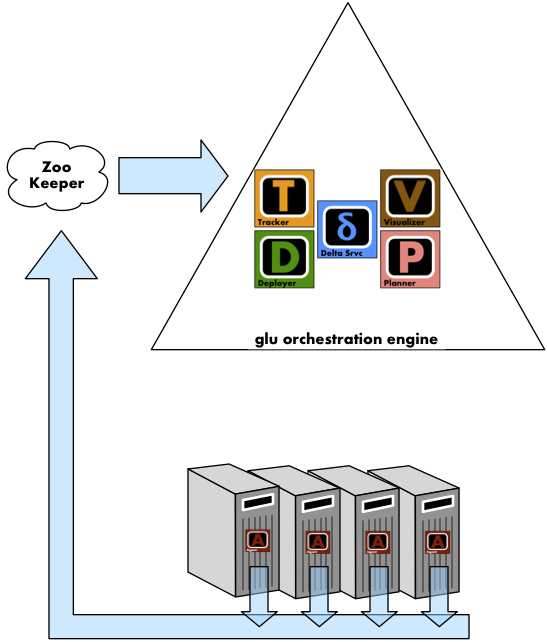

The diagram above represents a system with the various components (which will be defined in greater detail later):

- the nodes/hosts (bottom of the diagram) are where applications will be deployed

- a glu agent is running on each of those nodes

- ZooKeeper is used to maintain the live state as reported by the glu agents (blue arrows)

- the glu orchestration engine is the heart of the system

1. You define a model¶

The model is a JSON document in which you declare what/how/where to deploy an application:

    {
      "fabric": "prod-chicago",
      "entries": [
        {
          "agent": "node01.prod",
          "mountPoint": "/search/i001",
          "script": "http://repository.prod/scripts/webapp-deploy-1.0.0.groovy",
          "initParameters": {
            "container": {
              "skeleton": "http://repository.prod/tgzs/jetty-7.2.2.v20101205.tgz",
              "config": "http://repository.prod/configs/search-container-config-2.1.0.json",
              "port": 8080
            },
            "webapp": {
              "war": "http://repository.prod/wars/search-2.1.0.war",
              "contextPath": "/",
              "config": "http://repository.prod/configs/search-config-2.1.0.json"
            }
          }
        }
      ]
    }

- where to deploy it: agent and mountPoint (unique key)

- how to deploy it: script

- what to deploy: initParameters (which is a map made available to the script)
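As a rough illustration of how these three parts can be read back out of a model, here is a minimal Python sketch. It parses an abridged copy of the model above and indexes each entry by its unique (agent, mountPoint) key. The helper name `index_entries` is hypothetical and is not part of glu.

```python
import json

# Abridged copy of the model above (some initParameters trimmed for brevity).
MODEL_JSON = """
{
  "fabric": "prod-chicago",
  "entries": [
    {
      "agent": "node01.prod",
      "mountPoint": "/search/i001",
      "script": "http://repository.prod/scripts/webapp-deploy-1.0.0.groovy",
      "initParameters": {
        "container": {"port": 8080},
        "webapp": {
          "war": "http://repository.prod/wars/search-2.1.0.war",
          "contextPath": "/"
        }
      }
    }
  ]
}
"""


def index_entries(model):
    """Index entries by (agent, mountPoint); hypothetical helper, not a glu API.

    Raises ValueError if two entries share the same key.
    """
    indexed = {}
    for entry in model["entries"]:
        key = (entry["agent"], entry["mountPoint"])
        if key in indexed:
            raise ValueError(f"duplicate entry for {key}")
        indexed[key] = entry
    return indexed


model = json.loads(MODEL_JSON)
entries = index_entries(model)
for (agent, mount_point), entry in entries.items():
    print("where:", agent, mount_point)
    print("how:  ", entry["script"])
    print("what: ", sorted(entry["initParameters"]))
```

Checking the key for duplicates up front reflects the "unique key" constraint stated above; how glu itself enforces it is not covered here.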

Tip

If you want to achieve reproducibility, the model should be versioned in a source control management system like git or svn.

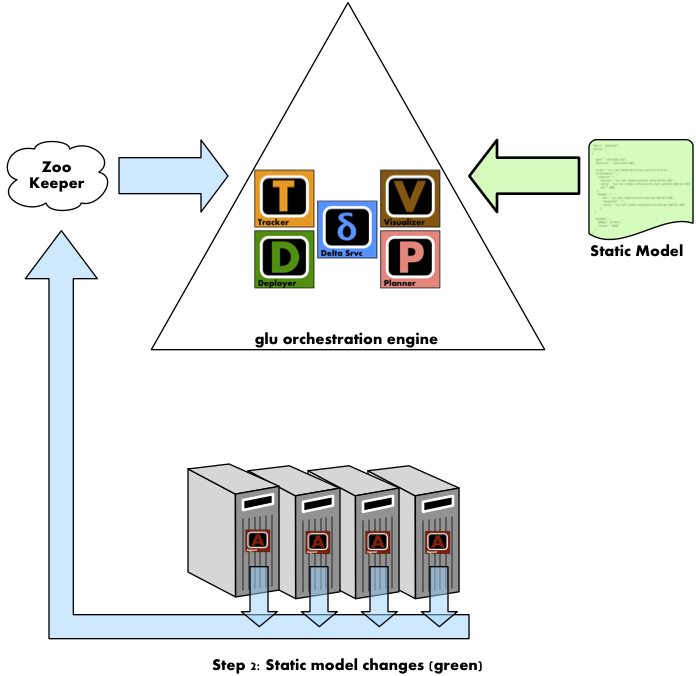

2. You load the model in the glu orchestration engine¶

You load the (previously defined) model in the glu orchestration engine which:

- compares the model you defined (desired state) with what is currently deployed (live state)

- generates a deployment plan, which consists of a set of commands to run (only if the two states differ)

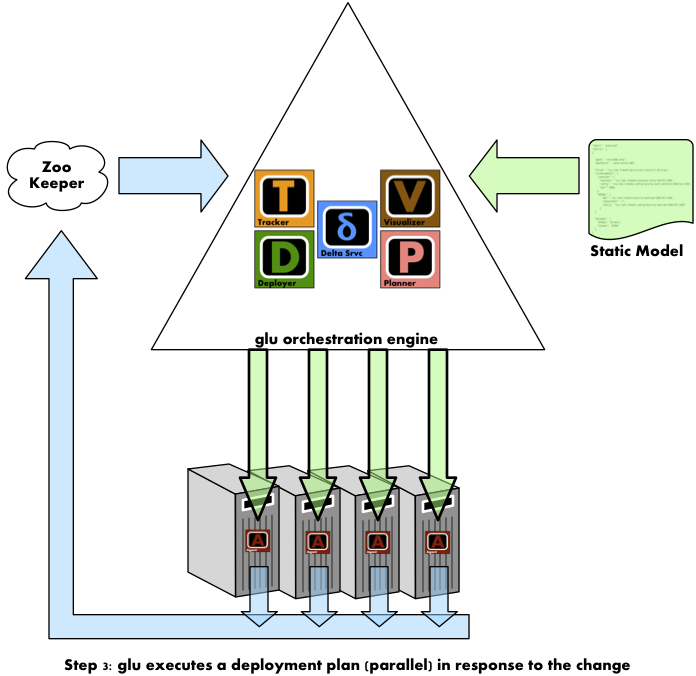
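The comparison between desired state and live state can be sketched as a simple diff over (agent, mountPoint) keys. This is only an illustration under simplifying assumptions: the function `compute_plan`, the action names (`deploy`, `redeploy`, `undeploy`) and the shape of the state dictionaries are made up for the example and do not reflect glu's actual plan format.

```python
def compute_plan(desired, live):
    """Return (action, key) steps that would turn `live` into `desired`.

    Both arguments map an (agent, mountPoint) key to a description of what
    runs there. Action names are illustrative only.
    """
    plan = []
    for key in sorted(desired.keys() | live.keys()):
        if key not in live:
            plan.append(("deploy", key))      # in the model, not yet running
        elif key not in desired:
            plan.append(("undeploy", key))    # running, but not in the model
        elif desired[key] != live[key]:
            plan.append(("redeploy", key))    # running with different content
    return plan


desired = {
    ("node01.prod", "/search/i001"): {"war": "search-2.1.0.war"},
    ("node02.prod", "/search/i001"): {"war": "search-2.1.0.war"},
}
live = {
    ("node01.prod", "/search/i001"): {"war": "search-2.0.0.war"},
    ("node03.prod", "/search/i001"): {"war": "search-2.0.0.war"},
}

plan = compute_plan(desired, live)
for step in plan:
    print(step)

# Identical states produce an empty plan: there is nothing to do.
print(compute_plan(desired, desired))
```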

3. You tell glu to execute the deployment plan¶

Note

glu will never do anything without your explicit approval: after you load the model in the orchestration engine, you must instruct glu to actually perform the operations (after you have had a chance to review them).

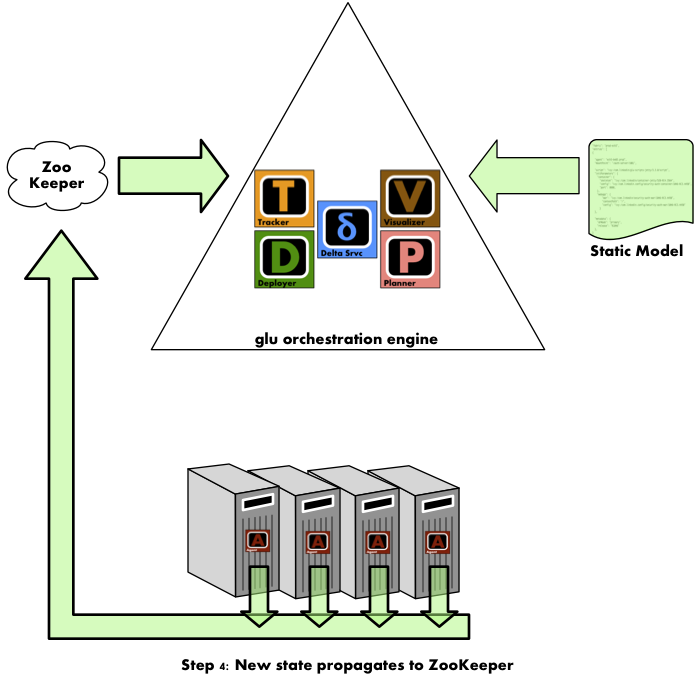

4. The glu agent executes the instructions and updates the state¶

The glu agent then follows the instructions coming from the glu orchestration engine (over a secure HTTP/REST channel). It then propagates the new state to ZooKeeper, which in turn relays it back to the orchestration engine.

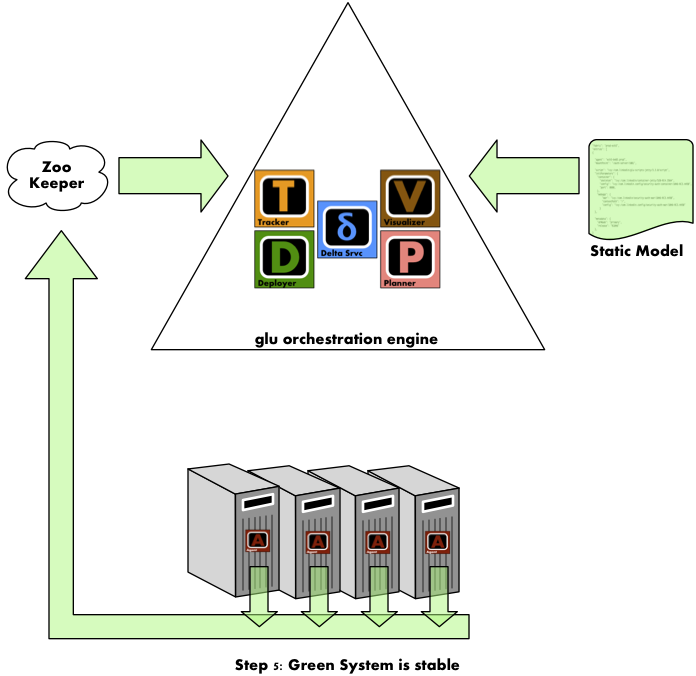
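The round trip described in this step (agent executes, agent reports, orchestration engine observes) can be sketched with an in-memory stand-in for ZooKeeper. Everything here is an assumption made for illustration: `StateStore`, `Agent`, `Engine`, the instruction names and the state values are hypothetical, and no real ZooKeeper or glu API is used.

```python
class StateStore:
    """In-memory stand-in for ZooKeeper: stores state and notifies watchers."""

    def __init__(self):
        self._state = {}
        self._watchers = []

    def watch(self, callback):
        self._watchers.append(callback)

    def publish(self, key, value):
        self._state[key] = value
        for callback in self._watchers:
            callback(key, value)


class Agent:
    """Hypothetical agent: applies an instruction, then reports its state."""

    def __init__(self, name, store):
        self.name = name
        self.store = store

    def execute(self, mount_point, instruction):
        # A real agent would run the glu script here; this sketch only
        # derives the resulting state and reports it.
        new_state = {"deploy": "running", "undeploy": "absent"}[instruction]
        self.store.publish((self.name, mount_point), new_state)


class Engine:
    """Hypothetical engine: builds the live state from store notifications."""

    def __init__(self, store):
        self.live_state = {}
        store.watch(self._on_update)

    def _on_update(self, key, value):
        self.live_state[key] = value


store = StateStore()
engine = Engine(store)
agent = Agent("node01.prod", store)

agent.execute("/search/i001", "deploy")
print(engine.live_state)
```

The point of the indirection is that the engine never asks the agent for its state directly; it only observes what the agent has published.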

5. The system is stable¶

The desired state (coming from the static model) and the live state (computed from ZooKeeper) are now the same: the system is stable.

The system will remain stable until something happens on either side:

- a new (different) model is loaded in the glu orchestration engine

- the live state changes because, for example, a machine or application went down

Key Components¶

Agent¶

The glu agent runs on every node/host in the system and is responsible for:

- listening to the glu orchestration engine (through a secure REST API).

- running glu scripts (the script entry defined in the model), which define what it means to deploy and monitor an application.

- reporting its state to ZooKeeper.

Model¶

The model is a JSON document which describes:

- which applications need to run

- on which hosts

- how to deploy and monitor them (through a glu script).

This document is typically kept under version control in an SCM (source control management) system.

glu script¶

A glu script is a set of instructions describing how to deploy and run an application. Typically there is one glu script per type of application (for example, there is a glu script that describes how to deploy and run a webapp in a jetty container, another one that describes how to deploy and run memcache, etc.). The glu script runs in the agent on the target host and is parameterized by the init parameters found in the model.
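To make "parameterized by the init parameters" more concrete, here is a small Python analogue. The model above points at a `.groovy` script, so this is not what a real glu script looks like: the class `WebappScript` and its `install` and `start` methods are hypothetical, chosen only to show one script being reused with different parameters.

```python
class WebappScript:
    """Hypothetical analogue of a script that deploys a webapp in a container."""

    def __init__(self, init_parameters):
        # The same map that appears under "initParameters" in the model.
        self.params = init_parameters
        self.log = []

    def install(self):
        container = self.params["container"]
        webapp = self.params["webapp"]
        self.log.append(f"fetch container skeleton {container['skeleton']}")
        self.log.append(f"fetch war {webapp['war']}")

    def start(self):
        port = self.params["container"]["port"]
        path = self.params["webapp"]["contextPath"]
        self.log.append(f"start container on port {port}, context path {path}")


init_parameters = {
    "container": {
        "skeleton": "http://repository.prod/tgzs/jetty-7.2.2.v20101205.tgz",
        "port": 8080,
    },
    "webapp": {
        "war": "http://repository.prod/wars/search-2.1.0.war",
        "contextPath": "/",
    },
}

script = WebappScript(init_parameters)
script.install()
script.start()
for line in script.log:
    print(line)
```

Deploying a different webapp with the same script would only require a different `init_parameters` map, which is why one script per type of application is usually enough.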

Orchestration engine¶

The orchestration engine is a separate process responsible for:

- listening to the agent updates (through ZooKeeper) to build the live state

- comparing the live state with the desired state (the model)

- generating the delta for visualization and the deployment plan

- orchestrating the execution of the deployment plan across the nodes (in parallel or sequentially)
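The difference between sequential and parallel execution of a plan can be sketched with Python's standard `concurrent.futures` module. The step format and the function names (`run_step`, `execute_sequentially`, `execute_in_parallel`) are assumptions for this example; a real step would contact the agent on the target node rather than return a string.

```python
from concurrent.futures import ThreadPoolExecutor


def run_step(step):
    """Pretend to run one plan step on its node and return a result record."""
    action, node = step
    return f"{action} done on {node}"


def execute_sequentially(steps):
    # One node at a time, in plan order.
    return [run_step(step) for step in steps]


def execute_in_parallel(steps, max_workers=4):
    # Steps run concurrently; map() still returns results in plan order.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(run_step, steps))


steps = [
    ("deploy", "node01.prod"),
    ("deploy", "node02.prod"),
    ("deploy", "node03.prod"),
]

sequential_results = execute_sequentially(steps)
parallel_results = execute_in_parallel(steps)
print(parallel_results)
```

Sequential execution limits how many nodes are affected at once if a step fails, while parallel execution shortens the total deployment time.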

Note

Currently the orchestration engine is bundled inside the console (which is a webapp).

Console¶

The console is a web application that allows you to control glu using a web browser.

Here is a list of key features offered by the console:

- user authentication and management (LDAP or console password)

- auditing (to keep track of who does what and when)

- access to all agent functionality (like viewing log files, displaying folders, killing processes…)

- configurable to suit your needs in terms of what gets displayed and in which order

- parallel deployment across any kind of node

- powerful filtering capabilities (for example, to define notions like a cluster)
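As an illustration of how filtering can give rise to a notion like a cluster, the sketch below selects model entries with a predicate. The console's actual filter syntax is not shown in this document, so the `select` helper and the choice of filtering on the `mountPoint` prefix are assumptions made purely for the example.

```python
entries = [
    {"agent": "node01.prod", "mountPoint": "/search/i001"},
    {"agent": "node02.prod", "mountPoint": "/search/i001"},
    {"agent": "node02.prod", "mountPoint": "/profile/i001"},
]


def select(entries, predicate):
    """Return the entries for which `predicate` is true (hypothetical helper)."""
    return [entry for entry in entries if predicate(entry)]


# A "search cluster" defined as every entry mounted under /search/.
search_cluster = select(entries, lambda e: e["mountPoint"].startswith("/search/"))

# Everything deployed on a single node.
on_node02 = select(entries, lambda e: e["agent"] == "node02.prod")

print([e["agent"] for e in search_cluster])
print([e["mountPoint"] for e in on_node02])
```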

Does glu really work?¶

glu is not an academic exercise. glu was built and successfully deployed at LinkedIn in early 2010, then released as open source in November 2010. glu helps LinkedIn manage the complexity of releasing hundreds of applications/services on a 1000+ node environment (as of December 2010, LinkedIn had 4 different environments, from a small integration environment to 2 large production environments).